I switched from Cursor to Claude Code and got stuck at 16% utilization

I thought I overpaid for Claude Code. I started a journey that taught me about different pricing paradigms and how to increase my token utilization effectively.

While tinkering over the holidays, I remember thinking: "This is so strange! I was easily using $350 of Claude tokens a month in Cursor. After switching to Claude Code, I'm barely making it past 16% in Claude Code's 5-hour sessions. Comparing Claude's and Cursor's $200 plans, I'm doing the same work, at the same velocity. Yet I'm experiencing totally different limits."

Given my ops and scaling experience, I'm mindful of how much it costs to operate software. So I obviously couldn't leave this alone! This journey started out with me worried I had overpaid for a $200 plan but ended up leading to a significant acceleration of my workflows as I tried to make full use of my Claude Code allotment.

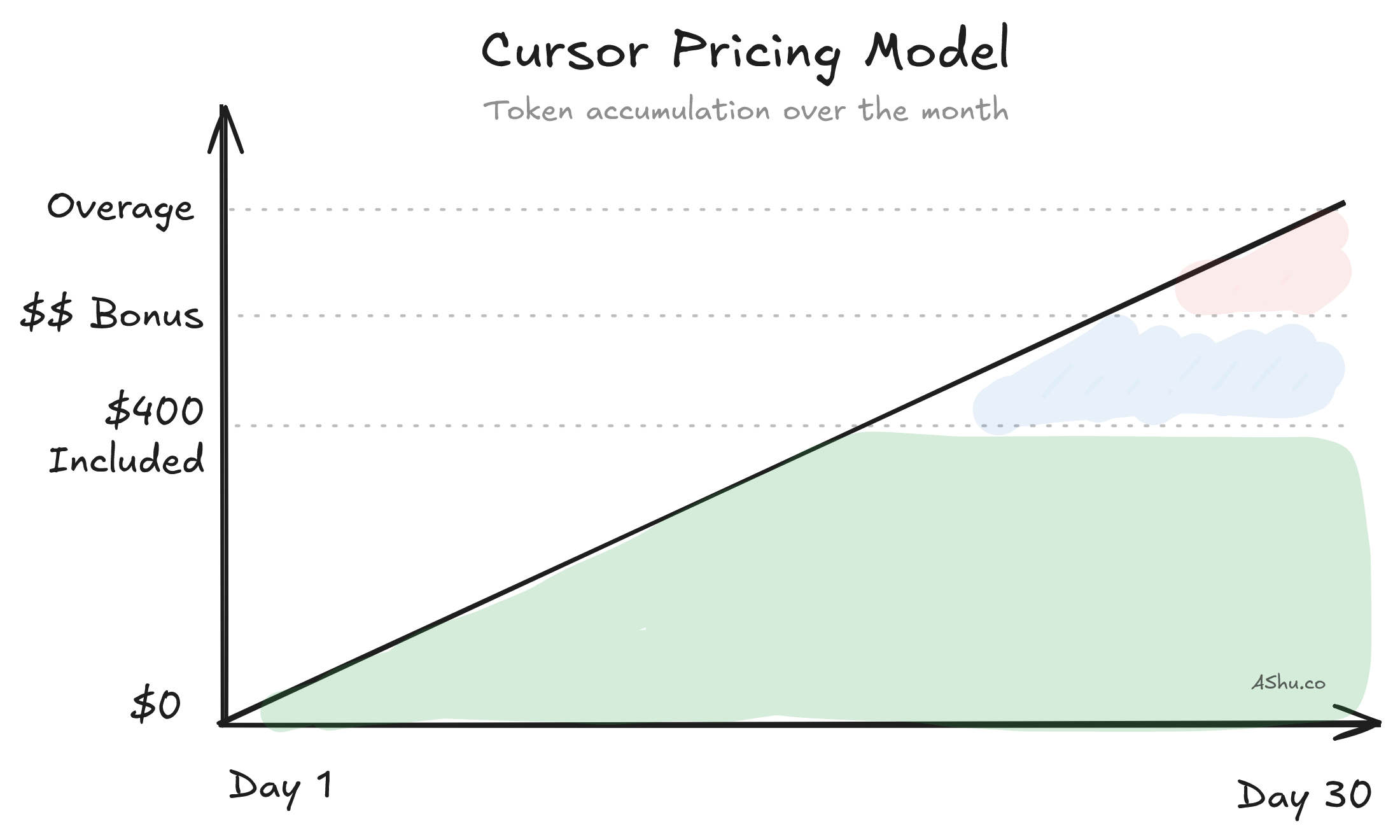

From Cursor to Claude Code: monthly token counter vs 5-hour speed limits

Within 15 minutes of using Claude Code, I realized that I was going to need much more than the Pro plan ($20/month). I started with the smallest paid plan to feel where the limits are. This threshold was initially a shock to me, since my mental model of "token limits" was still based on Cursor's monthly window.

At the time, it would take me a few days to use up Cursor's tokens. But with Claude's 5-hour cycle, you get fast feedback that the Claude plan you've chosen is too small. So to reframe my observation: it was not that I had "used up all the tokens for the month", but that I was using tokens at a much faster speed in this 5-hour session than was supported by the plan.

Given how fast I had hit my "$20 Pro Plan" limit, I assumed that I wouldn't need to try out the middle Max 5x plan and could jump straight to the $200/mo Max 20x plan. (Anthropic only charged me the prorated difference, so it was easy to upgrade.)

I assumed I was going to hit 80-100+% utilization like I had in Cursor, but I was wrong. Imagine my surprise when, after a day or two of coding, I realized that I never got anywhere close to 80-100% utilization on the Max 20x plan!

Did I overpay for Claude's Max 20x Plan? No, but I needed to learn how to use it.

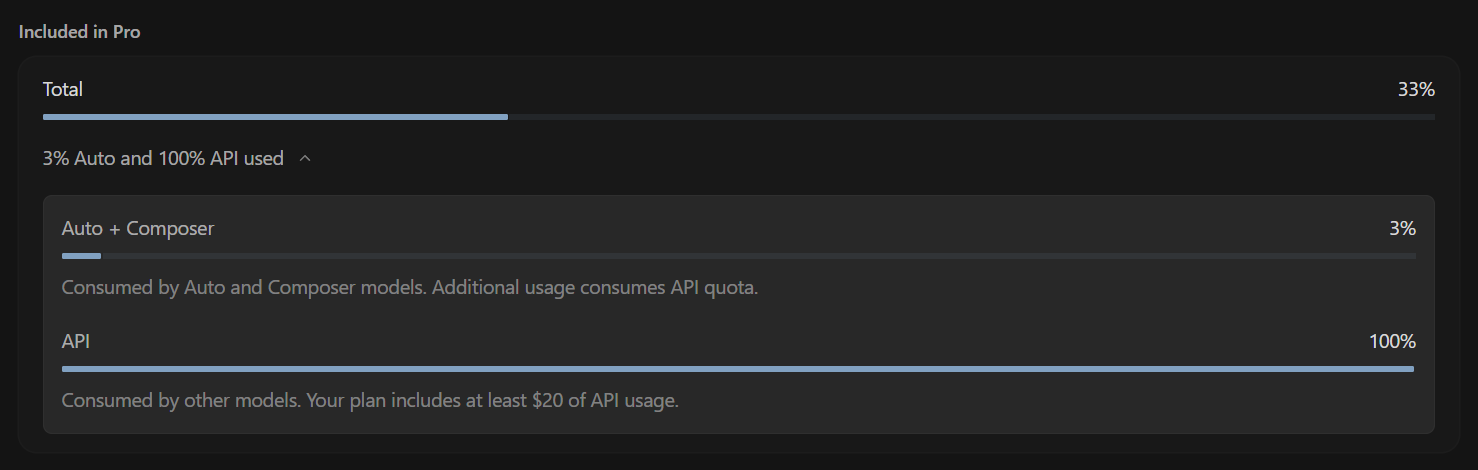

After using Claude Code for the first 2-3 sessions, I noticed I was only using 6-12% of the 5-hour usage window in each session. Thus I was only using a fraction of the $200 I spent. This was a surprise! I was doing the same coding workloads on Cursor and Claude Code.

Having such low Claude Code utilization was great, because it meant I could code more and spend less money! But it bothered me on two levels. Firstly, could I have gotten away with paying less? Secondly, how were people hitting 100%? Not just 100%: I was reading online about people using 2-3 Claude Code accounts.

My goal wasn't to maximize token spend or get to the top of the leaderboard. I was puzzled and bothered by this underutilization. So, the first thing I did was to set up structured markdown plans to launch longer-running agents that made full use of the 5-hour session. This let me confirm that tasks were reasonable, and I was unlikely to need to pause Claude's work to answer questions and troubleshoot.

After a few focused, high-usage sessions, I realized I could push my utilization to 14-16%. And that seemed to be the ceiling.

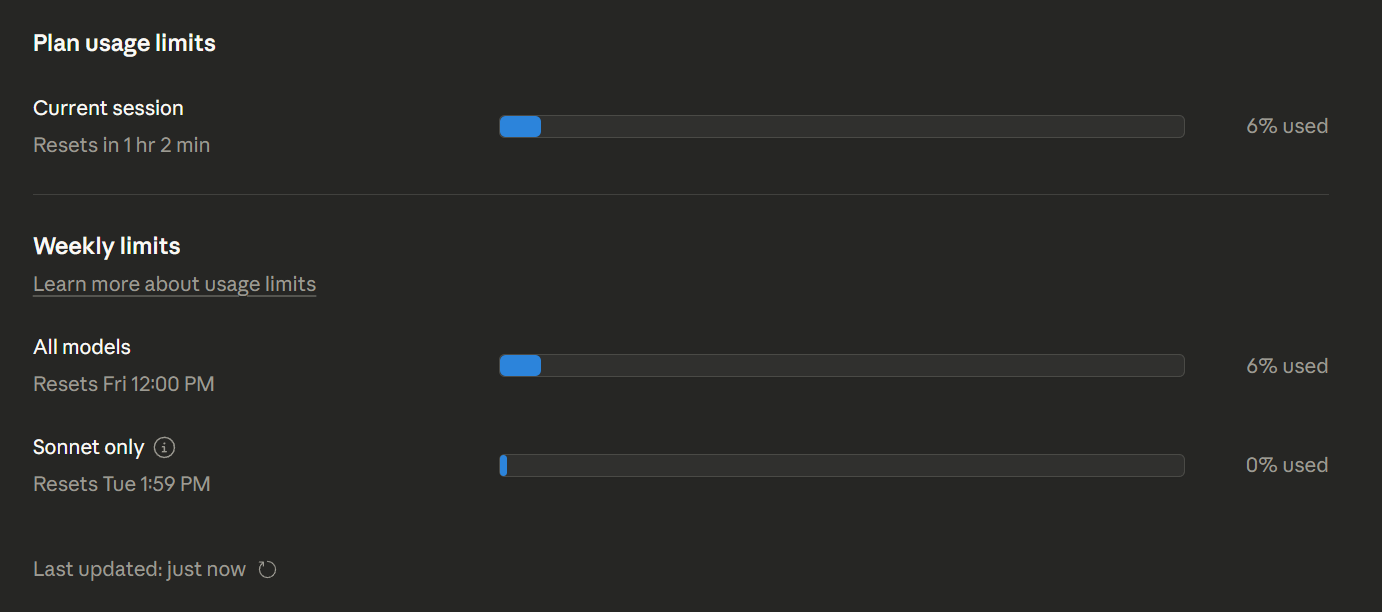

Claude Code's 5-hour sessions are a "use it or lose it" rate limit that spreads out usage

Let's dive into Claude Code's rate limiting system. The "5-hour usage" window functions as a "speed limit". In practice, this means you'll figure out your "speed of token usage" and calibrate your plan accordingly.

So when we compare Cursor's and Claude Code's pricing models, Cursor bills by total tokens consumed per month. That means I could leave Cursor untouched for 29 days, then use up all my tokens on the 30th day. (I assume there is a rate limit for extremely bursty token usage in Cursor, but I've never hit it.) It also means that comparing Cursor to Claude Code licensing is an apples-to-oranges comparison.

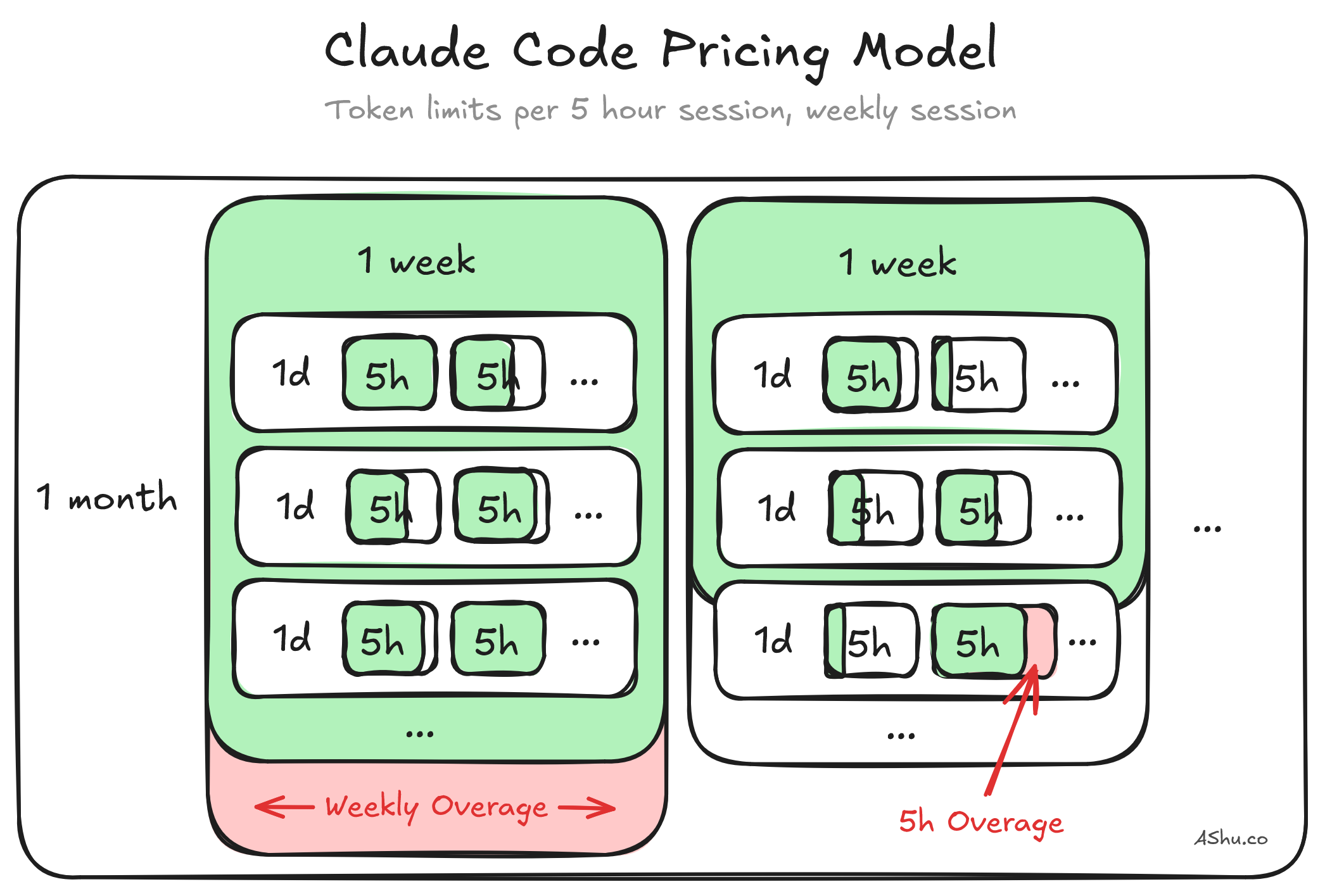

Claude Code also has "weekly limits" that are a second layer on top of the 5-hour limit. Imagine if you maxed out the 5-hour limit 24/7; that could get extremely expensive for Anthropic. So, Anthropic sets an upper limit for sustained usage over the week. If we reflect on pricing design, they could have set it as a one-month limit. But because the limit is weekly, you have to use your allotment within the week: it doesn't roll over to the next one.

So the 5-hour limit is a "burst speed limit", and the weekly limit is a "sustained usage limit". The 5-hour window smooths out utilization across the day, and the weekly window smooths it out over the month. Technically you can miss a few 5-hour windows and make them up later in the week. But if you don't use your allotment during the week, you don't get to make it up. Since tokens don't roll over from one week to the next, you use it or you lose it.
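To make the two layers concrete, here is a toy model of how a burst limit and a sustained limit can stack. This is only a sketch of my mental model, not how Anthropic actually implements it: the class name `TwoTierLimiter`, the budget numbers, and the rule that a new window starts at the first request after the previous one expires are all assumptions of mine.

```python
from dataclasses import dataclass

FIVE_HOURS = 5 * 3600        # seconds in one burst window
ONE_WEEK = 7 * 24 * 3600     # seconds in one weekly window


@dataclass
class TwoTierLimiter:
    """Toy model: a budget per 5-hour window plus a weekly cap.

    Budgets are in made-up units, not real token counts.
    """
    window_budget: int       # hypothetical units allowed per 5-hour window
    weekly_budget: int       # hypothetical units allowed per week
    window_start: float = 0.0
    week_start: float = 0.0
    window_used: int = 0
    week_used: int = 0

    def _roll(self, now: float) -> None:
        # Expired windows reset to zero. Unused budget is not carried over.
        if now - self.window_start >= FIVE_HOURS:
            self.window_start = now
            self.window_used = 0
        if now - self.week_start >= ONE_WEEK:
            self.week_start = now
            self.week_used = 0

    def try_spend(self, now: float, units: int) -> bool:
        """Record the usage and return True only if both limits allow it."""
        self._roll(now)
        if self.window_used + units > self.window_budget:
            return False     # burst "speed limit" hit
        if self.week_used + units > self.weekly_budget:
            return False     # sustained weekly limit hit
        self.window_used += units
        self.week_used += units
        return True

    def window_utilization(self) -> float:
        return self.window_used / self.window_budget


limiter = TwoTierLimiter(window_budget=100, weekly_budget=1000)
limiter.try_spend(now=0, units=16)
print(limiter.window_utilization())  # 0.16
```

The point of the sketch is the reset in `_roll`: whatever you left unused in a window simply disappears, which is the "use it or lose it" behavior.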

This isn't a bad thing. Most engineers spread their work out over days and weeks. Claude Code's system is a fair deal for typical engineering work, and it makes better use of both compute resources and the effort you put into writing code. If you want more usage than these plans allow, you're an advanced user and you'll need to pay API (i.e. higher) token prices.

Another way to look at it could be: the 5-hour sessions roughly map onto a 10-hour workday, and the weekly limit assumes roughly a 40-hour work week. So some of the windowing and upper limits may make sense through this lens, and may not make sense if you're trying to use your license on a 24/7 schedule. I haven't explored the math and logic behind this framing, but wanted to share it as a thought experiment. So take it with a grain of salt.
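For what it's worth, the back-of-the-envelope arithmetic behind that thought experiment looks like this. It assumes windows run back to back with no gaps, which is a simplification, and it says nothing about where Anthropic actually sets the weekly cap.

```python
HOURS_PER_WINDOW = 5
HOURS_PER_WEEK = 7 * 24  # 168


def windows_in(hours: float) -> float:
    """How many back-to-back 5-hour windows fit into a span of hours."""
    return hours / HOURS_PER_WINDOW


around_the_clock = windows_in(HOURS_PER_WEEK)  # 24/7 usage
work_week = windows_in(40)                     # a 40-hour work week
share = work_week / around_the_clock

print(f"{around_the_clock:.1f} windows if used 24/7")        # 33.6
print(f"{work_week:.1f} windows in a 40-hour week")          # 8.0
print(f"{share:.0%} of the around-the-clock maximum")        # 24%
```

So a 40-hour week touches roughly a quarter of the windows that exist in a calendar week, which is one way to see why a weekly cap below "every window maxed out" would still be plenty for a typical work schedule.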

So did I get past 16% utilization in Claude Code?

After all this, I was still stuck at 16% utilization. I understood why: the speed-limit system means that a single coding session with a single agent has a natural upper limit. No matter how focused I was, one person directing one AI agent can only consume tokens so fast.

And that raised the obvious question: if one agent tops out at ~16%, and people online are hitting 100%+ across multiple accounts... they must be running agents in parallel. This meant I needed to figure out how to coordinate multiple AI agents working on the same codebase without them stepping on each other's toes.
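As a rough sanity check on that reasoning, here is the arithmetic, assuming (optimistically) that utilization scales linearly with the number of agents and that every agent sustains the same pace as my single agent. The helper `agents_needed` is purely illustrative.

```python
import math


def agents_needed(per_agent_utilization: float, target: float = 1.0) -> int:
    """Parallel agents required to reach a target share of the 5-hour window."""
    if per_agent_utilization <= 0:
        raise ValueError("per_agent_utilization must be positive")
    return math.ceil(target / per_agent_utilization)


print(agents_needed(0.16))        # 7 agents to reach 100%
print(agents_needed(0.16, 0.5))   # 4 agents to reach 50%
```

Real numbers will differ, since agents spend time waiting on me, on tests, and on each other, but it shows the order of magnitude: a handful of agents, not dozens.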

To coordinate parallel agents, I had to rethink how I broke down projects. It also led me to git worktrees and additional changes in my local development environments. I'll cover that in my next post, and describe how it took my utilization from 16% to 50%+.